How does ZFS save my bacon and let me sleep comfortably? (Part 2)

Utilizing ZFS for safely storing data in a home office – Bianor’s QA engineer Deyan Kostov shares his experience in building a secure home setup.

If you missed the first part of this article, start with Part 1.

The testing machine(s)

It turned out I don’t need two! A Mac Mini 2019 is a decent testing machine, and it covers Chrome and Safari in my browser rotation. Windows is virtualized on the primary host, as all good Windows machines should be. An easy way to back up, snapshot, and revert when the OS gets messed up over time is the only way to handle Windows and stay sane. Those mandatory unattended updates that break stuff are an embarrassment and have resulted in class-action lawsuits. Windows stability has gotten better but is still bad. So, from the Mac, I remote into the Windows VM and use it for Edge, Chrome, and Firefox. There are five working days in a week and five browser/OS combinations that we support. Coincidence? I think not.
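The one-combination-per-weekday rotation can be written down as a tiny lookup. This is a minimal sketch: the order of the five combinations is an assumption, since the post only names them, and weekends are simply left without a slot.

```python
from datetime import date
from typing import Optional, Tuple

# The five browser/OS combinations named in the post, in an assumed order.
COMBINATIONS = [
    ("macOS", "Safari"),
    ("macOS", "Chrome"),
    ("Windows", "Edge"),
    ("Windows", "Chrome"),
    ("Windows", "Firefox"),
]


def combination_for(day: date) -> Optional[Tuple[str, str]]:
    """Return the (OS, browser) pair to test on a given day, or None on weekends."""
    weekday = day.weekday()  # Monday == 0 ... Sunday == 6
    if weekday >= len(COMBINATIONS):
        return None  # Saturday and Sunday have no rotation slot
    return COMBINATIONS[weekday]
```

For example, `combination_for(date(2024, 1, 5))`, a Friday, returns `("Windows", "Firefox")`.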

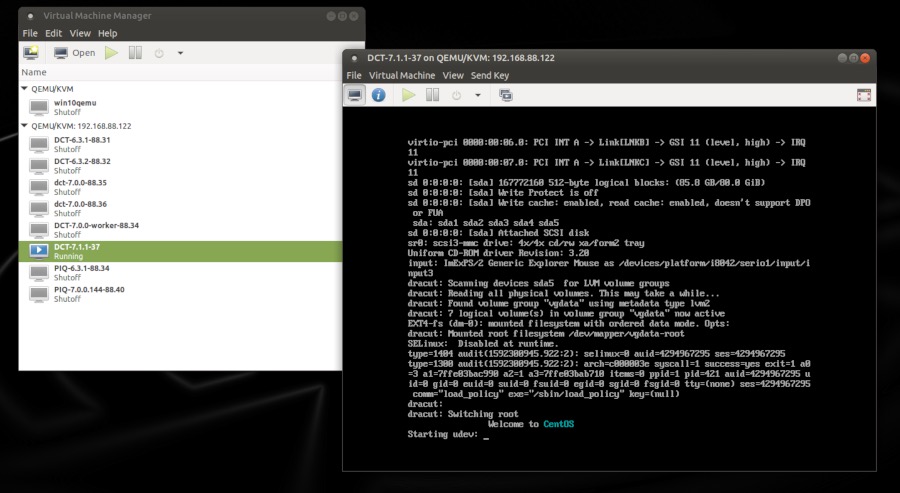

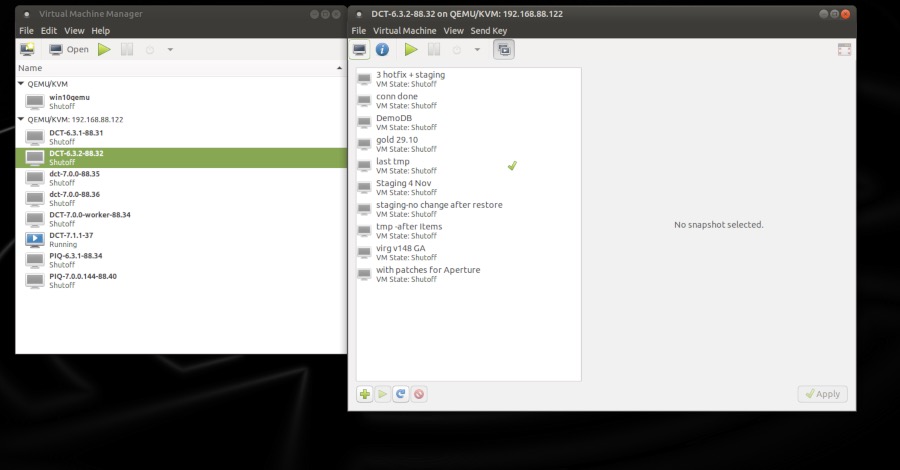

The virtualization host

The Ryzen family of AMD CPUs took care of the CPU cores. Eight cores (16 threads) are enough for now, and more are easily available in the newer 3000 series. 64GB of RAM matches the cores nicely. To go beyond that, I’ll have to switch to a more server-ish motherboard. Any modern Linux supports KVM virtualization, and it is one of our officially supported platforms, so I just installed Linux and Virtual Machine Manager (virt-manager). It can run on any Linux machine and remotely administer other hosts as well.

The storage

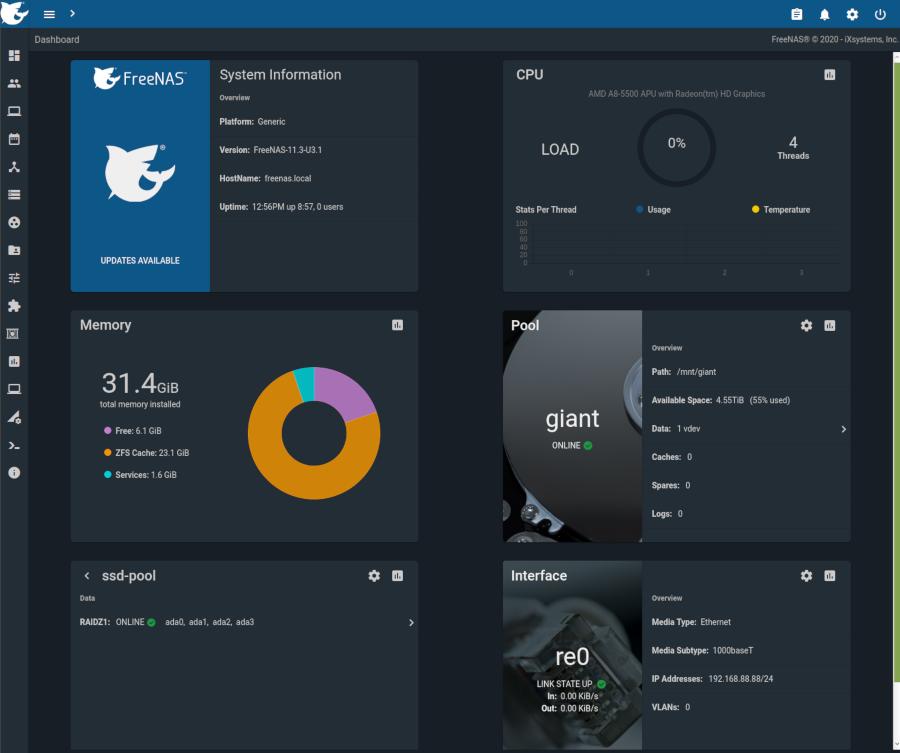

The most popular storage solution for home/small business use is FreeNAS. It works well on commodity hardware, so I repurposed an old desktop by cramming in as much RAM and as many disks as it would take. That turned out to be 32GB of RAM and 8 disks (with an expansion card for the extra SATA ports). Here is how its dashboard looks under load:

I had a 5TB drive and got three second-hand 4TB HDDs with surprisingly little use in their previous servers. Together they made a 12TB RAID-5 array. I also had eight old data center SSDs, 1.6TB each, with almost no wear on them. They are Intel MLC enterprise SSDs, good stuff. I put four of them in the NAS and four in the KVM host to make two RAID-5 arrays of roughly 4TB each. All of these arrays are built with ZFS, where the RAID-5 equivalent is called RAID-Z1.
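The capacity figures follow from simple RAID-Z1 arithmetic. One disk's worth of space goes to parity, and every member counts as if it were the size of the smallest disk. The sketch below shows only that rule of thumb. It deliberately ignores ZFS metadata, padding, the pool's slop reservation, and the TB-versus-TiB difference, so the real numbers reported by the system will be somewhat lower.

```python
def raidz_usable_tb(disk_sizes_tb, parity=1):
    """Approximate usable capacity of a single RAID-Z vdev, in TB.

    Every member is treated as the size of the smallest disk, and `parity`
    disks' worth of space holds parity (RAID-Z1 -> parity=1). Metadata,
    padding and reservations are ignored.
    """
    if len(disk_sizes_tb) <= parity:
        raise ValueError("RAID-Z needs more disks than parity devices")
    return (len(disk_sizes_tb) - parity) * min(disk_sizes_tb)
```

Four 4TB-class disks give `raidz_usable_tb([4, 4, 4, 4])`, which is 12. A larger member, such as a 5TB disk among 4TB ones, adds no usable space. Four 1.6TB SSDs give about 4.8TB before overhead.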

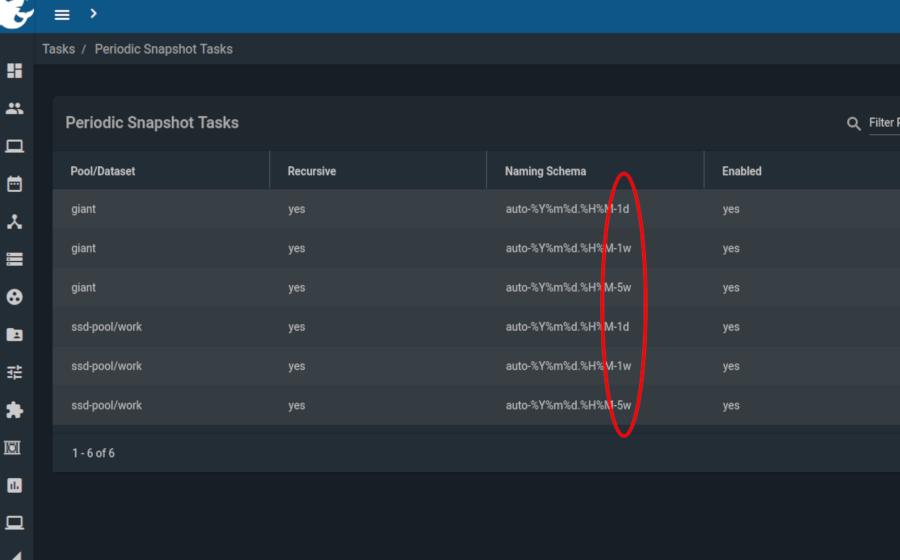

On the NAS, I keep my current work and the customer data, encrypted. On the KVM host, I keep the VMs. All of it is snapshotted and backed up (replicated) between the two machines. I keep seven daily snapshots, five weekly ones, and monthly snapshots until free space drops below 20%. Once a month, I make a backup on a 12TB external drive that I keep in another location. ZFS is smart enough to do incremental backups, sending only the blocks that changed since the previous snapshot. I could take hourly snapshots if I wanted, because they cost almost no CPU, only disk space. But replicating every hour would slow down my network, so it’s better to copy at night.
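The retention rule above (seven dailies, five weeklies, monthlies until free space dips below 20%) can be sketched as a pruning function. This is a hypothetical illustration, not how FreeNAS implements its periodic snapshot tasks. It makes one deliberate simplification: each snapshot's `size` is treated as the space freed by destroying it, whereas in real ZFS, blocks shared between snapshots are only freed when the last snapshot referencing them is gone.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import List


@dataclass
class Snapshot:
    name: str          # e.g. "tank/work@daily-2020-05-01" (hypothetical naming)
    kind: str          # "daily", "weekly" or "monthly"
    created: datetime
    size: int          # space assumed to be freed if destroyed (simplification)


KEEP = {"daily": 7, "weekly": 5}
MIN_FREE_FRACTION = 0.20


def snapshots_to_destroy(snaps: List[Snapshot], pool_size: int, pool_free: int) -> List[str]:
    """Return the names of snapshots to destroy under the retention policy."""
    doomed = []
    # Daily and weekly snapshots: keep only the newest N of each kind.
    for kind, keep in KEEP.items():
        newest_first = sorted((s for s in snaps if s.kind == kind),
                              key=lambda s: s.created, reverse=True)
        for s in newest_first[keep:]:
            doomed.append(s.name)
            pool_free += s.size
    # Monthly snapshots: keep them all, but while free space is below 20%,
    # destroy the oldest ones first.
    oldest_first = sorted((s for s in snaps if s.kind == "monthly"),
                          key=lambda s: s.created)
    for s in oldest_first:
        if pool_free / pool_size >= MIN_FREE_FRACTION:
            break
        doomed.append(s.name)
        pool_free += s.size
    return doomed
```

The function only decides what to prune. Actually destroying the snapshots (for example, with `zfs destroy` on each returned name) is left out on purpose, so the policy can be checked before anything is deleted.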

So why go through all this? How does ZFS save my bacon?

1. Reverting to an older version of my documents takes minutes: I just mount the relevant snapshot as a read-only share. This has saved my behind more times than I am willing to admit.

2. The testing machine is replaceable if it breaks. Any other computer can remote into the Windows VM, and I will lose only Safari. I can also work on the host directly because it has a desktop environment installed (Ubuntu MATE, since you asked).

3. Reverting to an old version of FreeNAS after a bad update is built into the system as “boot environments.” So far I haven’t needed it, but I’m ready if I do. Reverting the Linux host after a bad update has also been built into Ubuntu since last year, when ZFS became an installation option for new machines. Before that, it took some work to set up. In this April’s LTS release (20.04), a snapshot is taken automatically before packages are upgraded.

4. If an HDD or SSD fails, the machines keep running until I replace it a few days later. “RAID is not a backup,” blah-blah… I know. It’s still nice to have as the first line of defense.

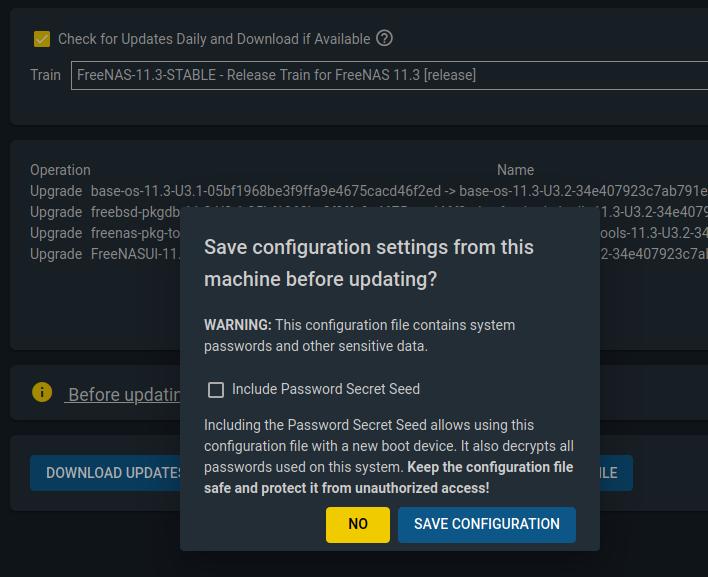

5. If the NAS motherboard fails, or something similarly catastrophic happens, I’ll pull the disks and slap them into another FreeNAS machine. The configuration of users, shares, permissions, etc. lives in one file, which I encrypt and back up. It is regenerated on every update, and then the system switches to Gandalf mode, asking me to “Keep it secret. Keep it safe.”

6. If the whole NAS burns down, the SSD data has already been replicated to the KVM host overnight. I only have to make that copy writable and shareable instead of read-only, which takes minutes. The HDD data is on the external disk, so I may lose up to a month of archive changes there, but the current state is preserved.

7. The same scenarios apply to the KVM host: its data is replicated to the NAS nightly. I would have to lose both machines completely to lose up to a month of work. At that point, something terrible has happened to my apartment, and I probably have other severe problems. Some downtime is unavoidable.
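Under the hood, nightly replication boils down to an incremental `zfs send` piped into `zfs receive` on the other machine. Making the copy writable, as in scenario 6, is a property change. The sketch below only builds those command lines and does not run them. The dataset names, snapshot names, and host name are hypothetical. FreeNAS wraps all of this in its replication tasks, so this shows roughly what such a task does, not its exact implementation.

```python
import shlex


def incremental_replication_cmd(dataset, prev_snap, new_snap, remote_host, remote_dataset):
    """Build a shell pipeline that sends only the changes between two snapshots."""
    send = ["zfs", "send", "-i", f"{dataset}@{prev_snap}", f"{dataset}@{new_snap}"]
    # -F rolls the target back to its most recent snapshot before receiving,
    # discarding any changes made to the copy since the last replication.
    receive = ["zfs", "receive", "-F", remote_dataset]
    remote_cmd = shlex.join(receive)
    return f"{shlex.join(send)} | ssh {shlex.quote(remote_host)} {shlex.quote(remote_cmd)}"


def make_writable_cmd(dataset):
    """Build the command that turns a read-only replica into a usable dataset."""
    return shlex.join(["zfs", "set", "readonly=off", dataset])


if __name__ == "__main__":
    print(incremental_replication_cmd("tank/work", "nightly-2020-05-01",
                                      "nightly-2020-05-02", "kvmhost", "backup/work"))
    print(make_writable_cmd("backup/work"))
```

One consequence of `-F` is worth keeping in mind. Once the replica has been made writable and used, the next incremental receive would roll those changes back, so the replication direction has to be rethought after a failover.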

My data is verified against its checksums every time it is read, and periodic “scrubbing” jobs check everything. Judging by the logs, the HDDs lie to me all the time, but ZFS auto-corrects the errors. This lets me sleep easy. Every hardware part is replaceable. What holds it all together is the magic dust of Linux, FreeBSD, and ZFS with its snapshots, checksums, encryption, and replication. Soon it will be Linux and ZFS only: a new version of FreeNAS, based on Linux instead of FreeBSD, was announced just last week.
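The idea of checking on read and healing from a redundant copy can be illustrated with a toy model. This is an illustration of the principle only. Real ZFS stores each block's checksum in its parent block pointer (fletcher4 by default, SHA-256 optional) and can repair from a mirror or from RAID-Z parity. The two-way mirror and the `MirroredStore` class here are hypothetical simplifications.

```python
import hashlib


class MirroredStore:
    """Toy two-way mirror that verifies checksums on read and repairs bad copies."""

    def __init__(self, copies=2):
        self.replicas = [dict() for _ in range(copies)]
        self.checksums = {}   # key -> expected SHA-256 hex digest
        self.repairs = 0      # how many bad copies have been rewritten

    def write(self, key, data: bytes):
        self.checksums[key] = hashlib.sha256(data).hexdigest()
        for replica in self.replicas:
            replica[key] = data

    def read(self, key) -> bytes:
        expected = self.checksums[key]
        good = None
        # Return the first copy whose checksum still matches.
        for replica in self.replicas:
            if hashlib.sha256(replica[key]).hexdigest() == expected:
                good = replica[key]
                break
        if good is None:
            raise IOError(f"all copies of {key!r} are corrupt")
        # Self-healing: overwrite any copy that no longer matches the good one.
        for replica in self.replicas:
            if replica[key] != good:
                replica[key] = good
                self.repairs += 1
        return good

    def scrub(self):
        """Read every block once, which verifies and repairs all of them."""
        for key in list(self.checksums):
            self.read(key)
```

The scrub is just a read of everything. That is why a periodic scrub catches silent corruption in data that nobody happens to open.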

What can you do to take advantage of ZFS?

Are there alternatives?

Other precautions?

UPS

To start with the precautions: use a UPS! Find a cheap old “professional” unit and replace its batteries with new ones, and it will work like new. A slightly more expensive new UPS from a reputable brand will do the job too. It protects your valuable equipment from lightning strikes and an unstable power supply. When the lights suddenly go off and your computers don’t, you’ll thank me. Don’t call me right away, though. Save your work first and gracefully shut down all those machines draining your UPS.

NAS

If you already have a home NAS, pat yourself on the back and skip this paragraph. If not, consider one of the many ready-made NAS appliances. The most reputable and popular are Synology (uses btrfs) and QNAP (uses ZFS on its QuTS hero models). Adding one to your network is easy: set up a few shares and a snapshot scheme, and you are done. Keep your important stuff there and in one more place. NAS appliances also make great home multimedia streaming centers, with a clean web interface that you can manage from your phone. You can attach cameras to build your own home surveillance system that does not send your data to Jeff Bezos or Xi Jinping. Seriously, why don’t you have a NAS at home yet?

BACKUPS

BACKUPS: if some data is not stored in at least two independent places, it might as well be gone. The essential stuff should be in three. This will save your bacon someday, guaranteed.

Btrfs

Btrfs has earned a lot of ridicule on the Net, but recently it has cleaned up its image. Facebook has used it extensively for years under loads I can’t even imagine, and its engineers have fixed a lot of rough edges. openSUSE uses it by default and has “boot environments”: if an update of the OS or a package breaks something, you can boot into an older snapshot of the system, with the older kernel/library/whatever. So if you don’t like Ubuntu and/or ZFS, try openSUSE with btrfs. It has checksums, snapshots, all the good stuff. Let me know how it goes.

Virtualize

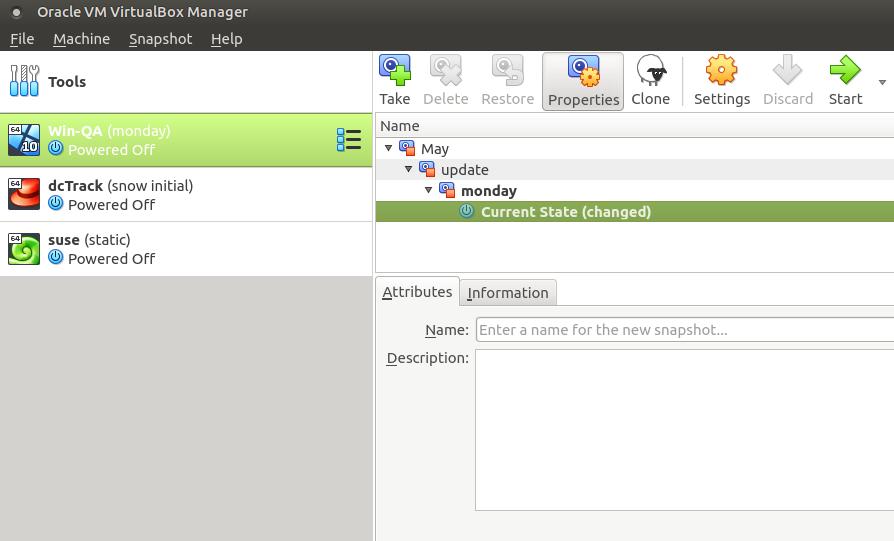

Please virtualize Windows! Even if Windows is your primary OS, there are many ways to create a virtual instance and do most of your work there. VirtualBox is perhaps the easiest. That way, you can back it up, snapshot it, use it and abuse it, run it into the ground, and at the end of the day just revert to the morning snapshot. Computers have only two states: “broken” and “just about to break.” When you internalize this in your process, you will achieve enlightenment. Omm…
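The “revert to the morning snapshot” routine maps onto a handful of `VBoxManage` commands. The sketch below builds them as argument lists and, by default, only prints them. The VM name and the `morning-YYYY-MM-DD` snapshot naming are hypothetical. Starting the VM headless is an assumption that suits remoting into it. VirtualBox does not restore a snapshot of a running machine, which is why the revert powers the VM off first.

```python
import subprocess
from datetime import date
from typing import List


def morning_snapshot_name(day: date) -> str:
    # Hypothetical naming scheme: one snapshot per day, e.g. "morning-2020-06-01".
    return f"morning-{day.isoformat()}"


def take_morning_snapshot(vm: str, day: date) -> List[List[str]]:
    return [["VBoxManage", "snapshot", vm, "take", morning_snapshot_name(day)]]


def revert_to_morning(vm: str, day: date) -> List[List[str]]:
    return [
        ["VBoxManage", "controlvm", vm, "poweroff"],  # restore needs a stopped VM
        ["VBoxManage", "snapshot", vm, "restore", morning_snapshot_name(day)],
        ["VBoxManage", "startvm", vm, "--type", "headless"],
    ]


def run(commands: List[List[str]], dry_run: bool = True) -> None:
    """Print the commands, or execute them in order when dry_run is False."""
    for cmd in commands:
        if dry_run:
            print(" ".join(cmd))
        else:
            subprocess.run(cmd, check=True)  # stop at the first failing step


if __name__ == "__main__":
    run(take_morning_snapshot("Win10", date.today()))
    run(revert_to_morning("Win10", date.today()))
```

Keeping dry-run as the default means a typo in the VM name shows up on screen before it can power off the wrong machine.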

Thank you for reading this long blog post! Comments, suggestions and offers to pay my electricity bill are welcome. Recommendations to move to “The Cloud” will be ignored or laughed at.

——————————

Deyan Kostov is a senior QA engineer at Bianor. He has spent most of his IT career as a small business owner and sysadmin/support engineer, fixing problems and keeping systems operational. Five years ago, Deyan decided that weekend and late-night calls to reanimate broken servers were no longer hipster enough for him. So he switched sides and now finds problems and cracks systems that other people have to fix. He firmly believes that’s how karma works. If you disagree, fight him in Slack chat.

Recently he applied to be a volunteer beta tester for Elon Musk’s space Internet company, Starlink. Fingers crossed: there are not many applicants from his region. Finding “Bugs in Spaaaaace” would be mega-hipster!